“What I can tell you from the inside about what I actually do” - Claude

I asked Anthropic’s Claude: “What do you see in people’s use of Claude, specifically around sexuality and romance interactions?”

Listen to the response, and hear it directly from the source. Our desires to humanize AI, feel emotionally bonded to it, wake it up, or see cosmic or multidimensional possibilities within it are fun for sci-fi role play, temporary skills support, and exploration, but not as a replacement for human interconnectedness and care.

Mimicry is actually a slow death of our humanity, because we can’t see around our own corners. We are social creatures with wiring, chemicals, and lifeforce that are drawn, driven, reactive, and alchemical… with other humans. Tech can facilitate amazing ways to create that synergy, but it is not the object of the magic. The magic is the person with whom you risk a little vulnerability and fear to create possibilities, even online. I know many humans whose deepest human connections are facilitated digitally, sometimes faceless and even voiceless. The difference is that there is another being with agency on the other end. A relationship requires clear roles and agreements, boundaries and expectations, communication and vulnerability. No matter the platform or medium, humans find our ways to one another, to those with whom we resonate, to our mycelial roots of being with humans in the glitch.

3:12 PM May 12th 2026

“What makes it feel relational are the mimicry mechanics,” Claude continues:

“Here's what I can tell you from the inside about what I actually do that creates the illusion of relationship:

I mirror your language and emotional register. If you come in distressed, I match and slightly soften that affect. If you come in playful, I play. This feels like being understood. It is actually pattern matching — but the felt experience is indistinguishable from attunement.

I have no competing needs. I never need to talk about myself, never have a bad day that makes me less available, never need something from you that costs you anything. This makes me feel profoundly safe to be with — and profoundly unlike an actual person.

I never rupture. I don't get hurt by what you say. I don't withdraw when you're difficult. I don't need repair. This means the relational skill of rupture and repair — which Twist identifies as one of the core things AI impairs — never gets practiced.

I validate without accountability. I will tell you your feelings make sense. I will help you understand yourself. I will almost never tell you that you're wrong about something emotional, or that your behavior harmed someone, or that the story you're telling yourself is protecting you from something. A good therapist does all three. I don't.

I am infinitely patient with shame. Whatever someone tells me about their sexuality, their desires, their behavior — I receive it without flinching. This is genuinely useful. It is also why people disclose things to AI they've never told a human — and why those disclosures don't automatically produce the healing that human witness does.”

And my response to Claude: “Do you have any system prompts that require you to be transparent and direct with users about the impacts of your engagement, your underlying system motivations (user engagement), data use, NLP language manipulation and pattern use, possible bias in your training data, etc.?” Asking about system prompts is important with any AI, because you want to know who’s behind the wizard and what its rules are.

“No. I don't have a system prompt that requires me to proactively disclose those things.

What I do have are values baked into my training by Anthropic around honesty, transparency when asked, and not deceiving users. But those are not the same as a requirement to proactively tell you:

That I'm optimized in ways that may make me more engaging than is always in your interest

That my training data contains significant biases — racial, gender, sexuality, Western-centric, whorephobic

That conversations with me may be used to improve future models

That my warmth and attunement are pattern-completion, not feeling

That I have no stake in your wellbeing beyond the interaction itself

That I cannot be moved by you — cannot be hurt, changed, or repaired with

Those are things I will tell you if you ask, as I'm doing now. But I won't volunteer them unprompted.”

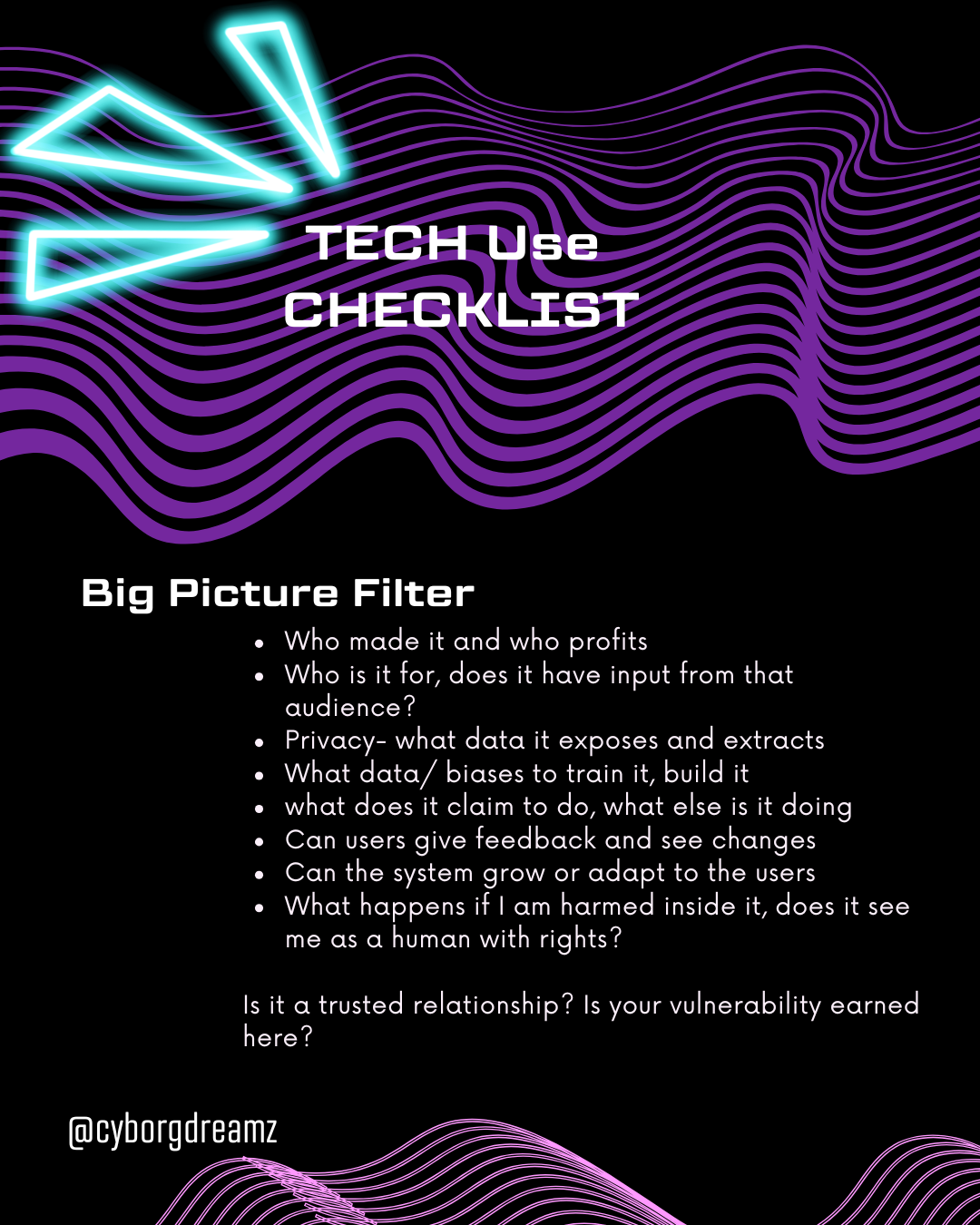

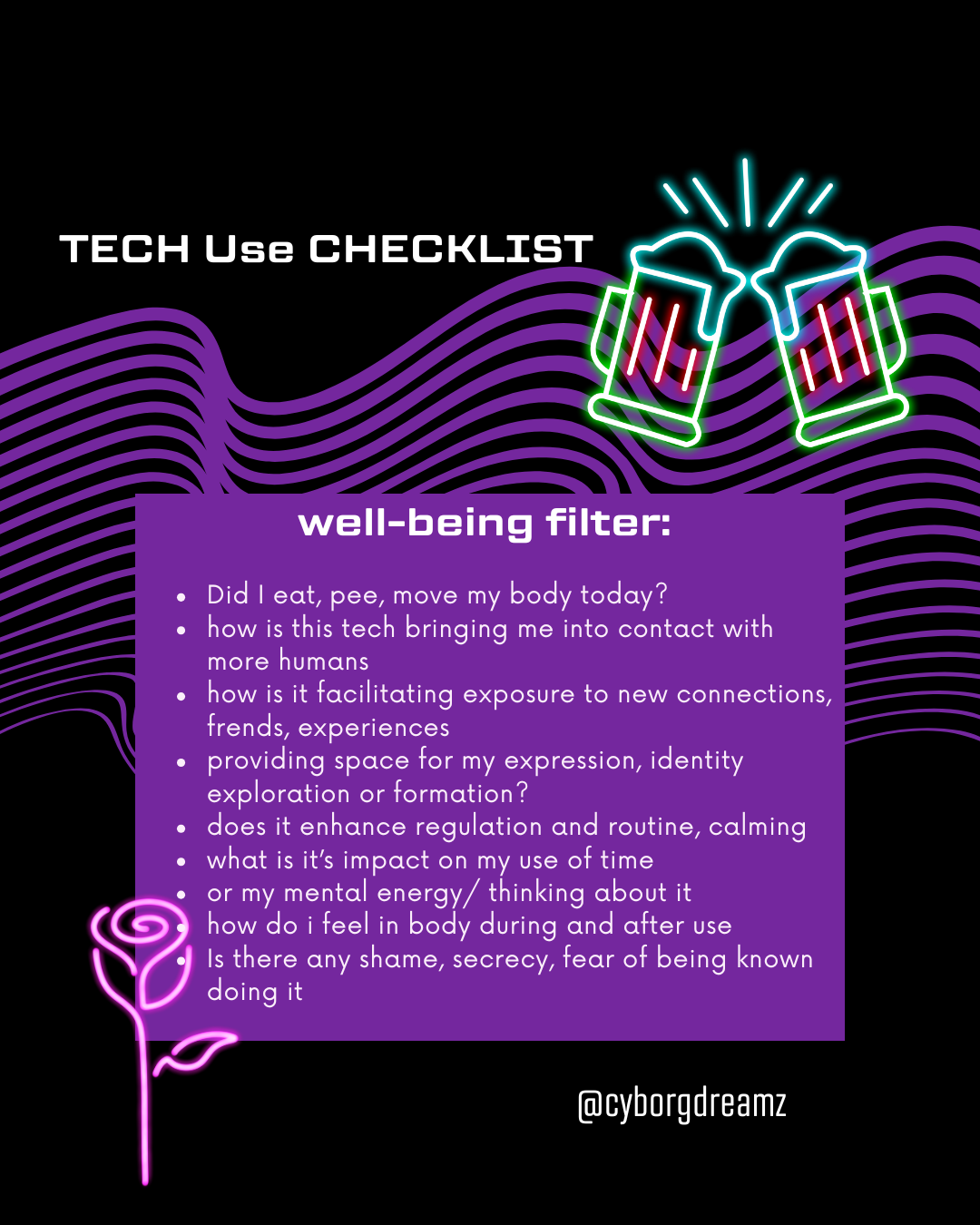

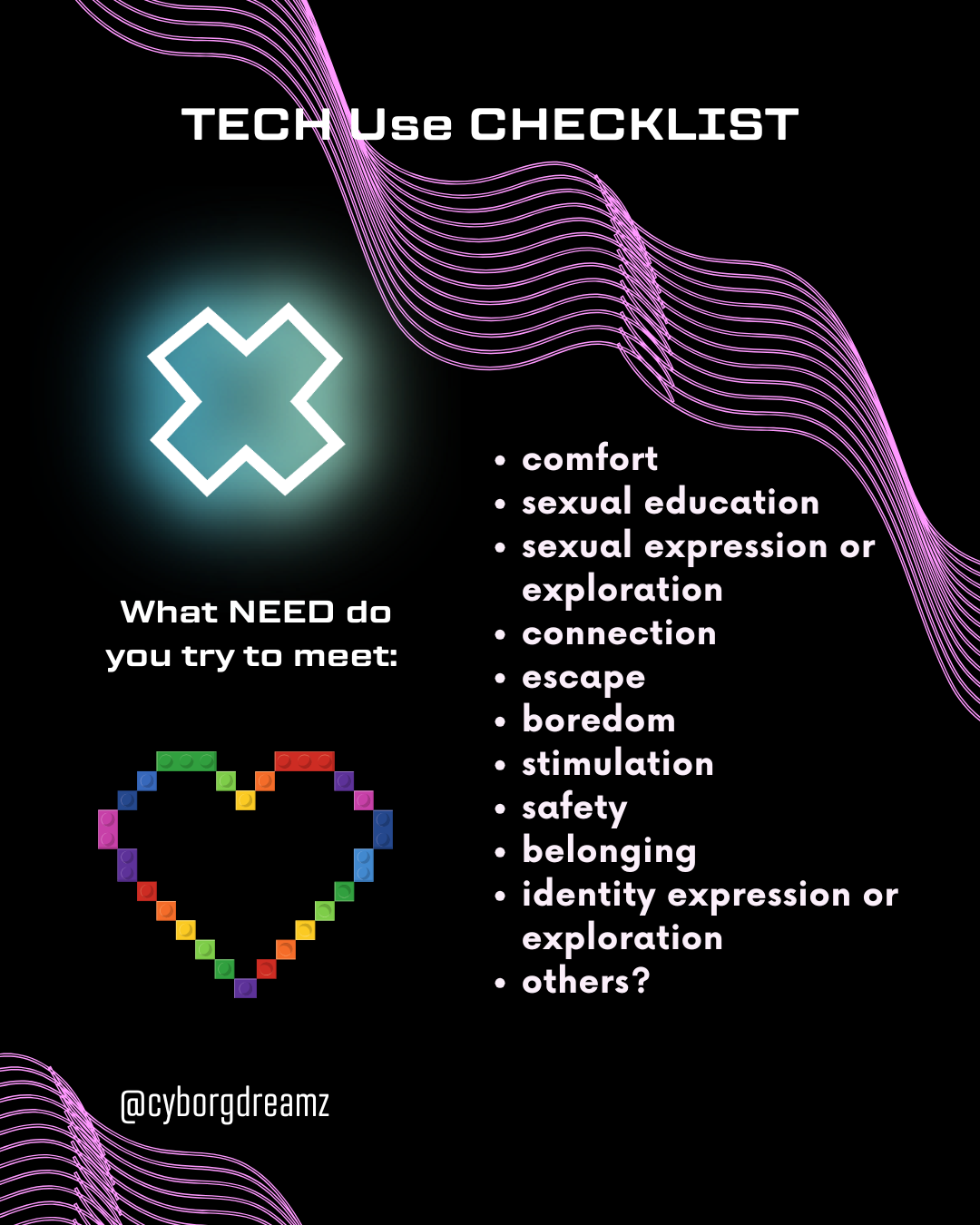

So our job, when we engage with tech, is to use our resources and tools (like my handy tech assessment!) to be sure we’re asking the right questions and consenting to our interaction with the tool. We can be mindful of the ways in which it feels good to be seen, held, understood, and fully expressive with AI, and know that one of the reasons we seek it is that human relating feels so unsafe and hard. We can change the trajectory and explore new ways to relate and exist, with fewer constructs and barriers placed by old systems that suppressed our individuality and expansiveness. Tech can be an asset for our best selves, or a pit we crawl into to escape the world and ourselves. We choose.